Observability Profile

Event architecture, correlation engine, detection patterns, and operational playbooks for agentic systems.

For SREs, SOC analysts, detection engineers, and platform reliability engineers responsible for monitoring, detecting threats in, and responding to incidents involving autonomous AI agents in production.

The key question this profile answers: How do I see what my agents are doing and respond when they misbehave?

Scope boundary: This profile covers runtime operations — monitoring, detection, correlation, and response. Infrastructure deployment belongs to the Platform Profile. Threat modeling belongs to the Security Profile. Regulatory evidence generation belongs to the GRC Profile.

The Observability Challenge

Agent systems are not applications. Applications do what they're told. Agents reason, choose tools, take actions, delegate to other agents, and modify their own behavior over time. Every one of these capabilities introduces failure modes and attack vectors that infrastructure observability — latency, errors, throughput — cannot see.

The observability challenge for agentic systems has three dimensions:

- Agent events are not infrastructure events. Infrastructure observability tells you whether the system is running. Agent observability tells you whether the system is behaving within governance boundaries. "Ring 0 executed in 3 seconds" is infrastructure. "Ring 1 verified the output, Ring 2 authorized the action, and the provenance chain is complete" is agent observability.

- Three detection domains share one event stream. Quality failures, security incidents, and governance violations often share the same event evidence. A quality degradation pattern might be a security attack. A compliance anomaly might indicate a compromised agent. Separating these into different tools loses critical correlation signal.

- Correlation, not just collection. Raw event collection is necessary but not sufficient. The value is in correlation: three agents all bypassing quality gates in the same week; a gradual trust escalation followed by an anomalous high-stakes output; cost per execution trending up 40% over a month. The correlation engine transforms a log store into an intelligence system.

The SIEM Pattern for Agents

Traditional SIEM (Security Information and Event Management) ingests security events from infrastructure and applies correlation rules, playbooks, and forensic investigation to detect and respond to threats.

Agentic Observability applies the same pattern, but with different event sources:

| Traditional SIEM | Agentic Observability |
|---|---|
| Firewall logs, endpoint events | Ring boundary events, tool calls, gate decisions |
| Network authentication | Agent identity verification, delegation chains |
| File integrity monitoring | Provenance chain integrity, evidence tampering |
| User behavior analytics | Agent behavioral baselines, trust trajectories |
| Incident response playbooks | Governance response playbooks (quarantine, trust degradation, containment) |

The threats differ too: not unauthorized network access, but ungoverned autonomous action, adversarial manipulation, quality degradation, policy violations, and trust manipulation.

The architecture is the same: structured event ingestion → correlation rules → detection → response playbooks → forensic investigation.

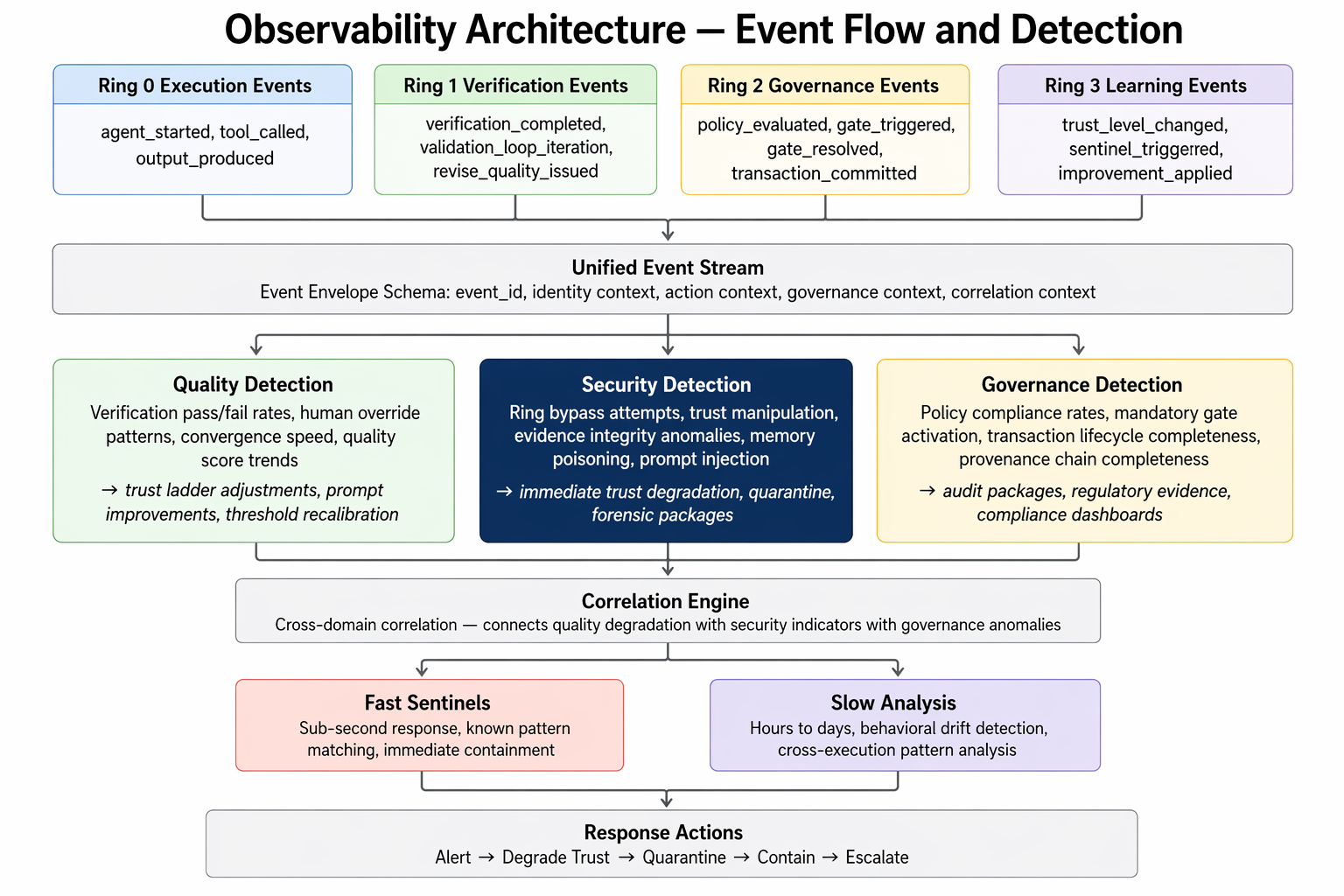

Three Detection Domains, One Event Stream

The unified event stream feeds three detection domains. Separating them into different tools loses critical correlation signal.

Quality Detection (Ring 3 Intelligence)

Monitors:

- Verification pass/fail rates by agent, task type, document type

- Human override patterns (which fields, which directions, which agents)

- Convergence speed (validation loop iterations to pass)

- Quality score distributions and trends

- Cost and latency per ring, per agent, per task type

Produces:

- Trust ladder adjustments (empirical, data-driven)

- Prompt improvement recommendations (from override patterns)

- Threshold recalibration signals (from false positive analysis)

- Anomaly alerts (sentinel fast path for immediate trust degradation)
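A minimal sketch of two of the quality signals above (verification pass rate and convergence speed), assuming events arrive as plain dicts shaped like the event envelope described later. The `passed` flag on `verification_completed` and the `iterations` count on `validation_loop_converged` are hypothetical fields, not part of the documented schema.

```python
from collections import defaultdict
from statistics import mean


def quality_summary(events):
    """Per-agent verification pass rate and mean validation-loop iterations.

    Assumes (hypothetically) that verification_completed events carry a
    boolean "passed" field and validation_loop_converged events carry an
    integer "iterations" field.
    """
    passed = defaultdict(int)
    total = defaultdict(int)
    iterations = defaultdict(list)
    for e in events:
        agent = e["actor_id"]
        if e["action_type"] == "verification_completed":
            total[agent] += 1
            passed[agent] += int(e["passed"])
        elif e["action_type"] == "validation_loop_converged":
            iterations[agent].append(e["iterations"])
    # Only agents with at least one verification result get a pass rate.
    return {
        agent: {
            "pass_rate": passed[agent] / total[agent],
            "mean_iterations": mean(iterations[agent]) if iterations[agent] else None,
        }
        for agent in total
    }


events = [
    {"actor_id": "extractor-1", "action_type": "verification_completed", "passed": True},
    {"actor_id": "extractor-1", "action_type": "verification_completed", "passed": False},
    {"actor_id": "extractor-1", "action_type": "validation_loop_converged", "iterations": 3},
    {"actor_id": "extractor-1", "action_type": "validation_loop_converged", "iterations": 1},
]
summary = quality_summary(events)
```

Breaking the same numbers down by task type or document type is the same grouping with a different key.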

Security Detection (Cross-cutting)

Monitors:

- Ring bypass attempts (outputs without expected verification events)

- Trust manipulation trajectories (building trust on low-stakes for high-stakes exploitation)

- Evidence integrity anomalies (source authentication failures, provenance tampering)

- Memory poisoning indicators (compromised data entering Ring 3)

- Prompt injection patterns (adversarial content altering behavior)

- Identity anomalies (delegation chain inconsistencies, unexpected model changes)

- Cross-pipeline poisoning (compromised Pipeline A output feeding Pipeline B)

Produces:

- Immediate trust degradation on anomaly detection (sentinel fast path)

- Quarantine of affected outputs pending investigation

- Forensic investigation packages (event timeline, provenance walkback, identity trace)

- Incident response playbook triggers

- Security posture dashboards

Governance Detection (Compliance)

Monitors:

- Policy compliance rates across all Ring 2 evaluations

- Mandatory gate activation (was every required gate triggered?)

- Transaction lifecycle completeness (pre-commit → commit → confirm)

- Approval freshness (stale approvals relative to context changes)

- Provenance chain completeness (gaps in the evidence → decision trace)

Produces:

- Audit packages: complete governance evidence for a scope (time range, agent, case type)

- Regulatory evidence: documentation for EU AI Act, NIST AI RMF, ISO 42001

- Compliance dashboards: policy violation rates, gate compliance, provenance coverage
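Transaction lifecycle completeness is one of the more mechanical governance checks. A minimal sketch, assuming events are dicts with `case_id`, `action_type`, and a numeric `timestamp` (e.g. epoch seconds), that flags any case whose transaction events are not exactly pre-commit, commit, confirm in that order:

```python
LIFECYCLE = ("transaction_pre_commit", "transaction_committed", "transaction_confirmed")


def incomplete_transactions(events):
    """Return sorted case_ids whose transaction events, in timestamp order,
    are not exactly pre-commit -> commit -> confirm.

    Deliberately strict: retries, duplicates, and in-flight cases are all
    flagged. A production check would likely add a grace window for
    in-flight work.
    """
    steps_by_case = {}
    for e in sorted(events, key=lambda ev: ev["timestamp"]):
        if e["action_type"] in LIFECYCLE:
            steps_by_case.setdefault(e["case_id"], []).append(e["action_type"])
    return sorted(
        case for case, steps in steps_by_case.items() if tuple(steps) != LIFECYCLE
    )


events = [
    {"case_id": "c1", "timestamp": 1, "action_type": "transaction_pre_commit"},
    {"case_id": "c1", "timestamp": 2, "action_type": "transaction_committed"},
    {"case_id": "c1", "timestamp": 3, "action_type": "transaction_confirmed"},
    # c2 committed without a pre-commit record
    {"case_id": "c2", "timestamp": 1, "action_type": "transaction_committed"},
    {"case_id": "c2", "timestamp": 2, "action_type": "transaction_confirmed"},
]
print(incomplete_transactions(events))  # ['c2']
```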

Event Architecture

The Event Envelope

Every material agent action emits an event with a common schema. The envelope is the atomic unit of agentic observability.

```
Event Envelope:
  event_id:          Unique event identifier
  timestamp:         When the event occurred

  # Identity context (#14, travels with every event)
  actor_type:        agent | human | system
  actor_id:          Specific agent instance or human
  actor_version:     Agent configuration version / config hash
  model_id:          Which model, version, provider
  delegation_chain:  Who authorized this actor, under what authority
  tenant_id:         Whose data scope

  # Action context
  action_type:       What happened (from event taxonomy)
  target_type:       What was acted upon
  target_id:         Specific target
  previous_state:    State before the action
  new_state:         State after the action

  # Governance context
  ring:              Which ring (R0, R1, R2, R3)
  deployment_mode:   wrapper | middleware | graph_embedded
  policy_reference:  Which policy rule was evaluated
  gate_type:         mandatory | adaptive | none

  # Quality context
  confidence:        Confidence score (if applicable)
  provenance_link:   Link to the provenance chain node

  # Correlation context
  case_id:           Decision case / assessment / workflow
  run_id:            Specific execution run
  session_id:        Session context
  parent_event_id:   Causal link to triggering event
```

Event Taxonomy

Events are classified by ring and map to the three detection domains:

Execution events (Ring 0):

```
agent_started, agent_completed, agent_failed
tool_called, tool_returned, tool_failed
output_produced, output_validated_schema
```

Verification events (Ring 1):

```
verification_started, verification_completed
validation_loop_iteration, validation_loop_converged, validation_loop_exhausted
adversarial_critique_started, adversarial_critique_completed
finding_produced(severity, field, finding_type)
revise_quality_issued
```

Governance events (Ring 2):

```
policy_evaluated, policy_passed, policy_violated
gate_triggered(gate_type: mandatory | adaptive; decision_id: references the newly created pending GDR)
gate_resolved(resolution: approve | reject | modify | defer | escalate; decision_id: references the now-resolved GDR)
provenance_recorded
revise_context_issued
transaction_pre_commit, transaction_committed, transaction_confirmed
approval_granted, approval_expired, approval_invalidated (each carries decision_id referencing the underlying GDR)
```

Learning events (Ring 3):

```
trust_level_changed(direction: increased | decreased | reset)
sentinel_triggered(fast path anomaly detection)
improvement_recommended, improvement_applied, improvement_rolled_back
memory_written, memory_queried, memory_pruned
```
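One way to make the envelope and taxonomy enforceable at emission time is a typed record that rejects unknown event types. The sketch below is an illustration, not a reference implementation: it carries only a subset of the envelope fields, and the class and constant names are hypothetical.

```python
import uuid
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Event names per ring, copied from the taxonomy above.
TAXONOMY = {
    "R0": frozenset("""agent_started agent_completed agent_failed tool_called
        tool_returned tool_failed output_produced output_validated_schema""".split()),
    "R1": frozenset("""verification_started verification_completed
        validation_loop_iteration validation_loop_converged validation_loop_exhausted
        adversarial_critique_started adversarial_critique_completed
        finding_produced revise_quality_issued""".split()),
    "R2": frozenset("""policy_evaluated policy_passed policy_violated gate_triggered
        gate_resolved provenance_recorded revise_context_issued
        transaction_pre_commit transaction_committed transaction_confirmed
        approval_granted approval_expired approval_invalidated""".split()),
    "R3": frozenset("""trust_level_changed sentinel_triggered improvement_recommended
        improvement_applied improvement_rolled_back memory_written
        memory_queried memory_pruned""".split()),
}


@dataclass(frozen=True)
class EventEnvelope:
    # Subset of the identity, action, governance, and correlation fields.
    actor_type: str  # agent | human | system
    actor_id: str
    action_type: str  # must be registered for `ring` in TAXONOMY
    ring: str  # R0 | R1 | R2 | R3
    tenant_id: str
    case_id: str
    run_id: str
    delegation_chain: tuple = ()
    parent_event_id: Optional[str] = None
    confidence: Optional[float] = None
    event_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def __post_init__(self):
        # Reject events that are not part of the taxonomy for their ring.
        if self.action_type not in TAXONOMY.get(self.ring, frozenset()):
            raise ValueError(f"{self.action_type!r} is not a {self.ring} event")


event = EventEnvelope(
    actor_type="agent", actor_id="extractor-1", action_type="tool_called",
    ring="R0", tenant_id="tenant-a", case_id="case-42", run_id="run-7",
)
```

Emitting through a constructor like this means a misspelled or mis-ringed event fails loudly at the source instead of silently never matching a correlation rule.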

OpenTelemetry Alignment

AGF event architecture aligns with the OpenTelemetry GenAI semantic conventions. Key mappings:

| OTel GenAI Attribute | AGF Event Field | Notes |
|---|---|---|
| gen_ai.system | model_id.provider | Model provider |
| gen_ai.request.model | model_id.model | Specific model |
| gen_ai.usage.input_tokens | Ring 0 execution event | Per-call token accounting |
| gen_ai.operation.name | action_type | What the agent did |

AGF extends OTel with governance-specific fields (ring, gate_type, policy_reference, delegation_chain) that the base GenAI conventions don't cover.
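A sketch of the mapping in the table, flattening an envelope (as a dict) into OpenTelemetry-style span attributes. The `gen_ai.*` keys are the ones listed above; the `agf.*` namespace for the governance extensions is a hypothetical naming choice, and attribute names in the GenAI conventions have changed between releases, so check the version you target.

```python
def to_otel_attributes(event: dict) -> dict:
    """Flatten an envelope dict into OTel-style span attributes.

    Assumes model_id is a dict with "provider" and "model" keys and that token
    counts, when present, arrive as event["input_tokens"]; both are hypothetical.
    """
    attrs = {
        "gen_ai.system": event["model_id"]["provider"],
        "gen_ai.request.model": event["model_id"]["model"],
        "gen_ai.operation.name": event["action_type"],
        # Governance extensions (hypothetical "agf." prefix).
        "agf.ring": event["ring"],
        "agf.gate_type": event.get("gate_type", "none"),
        "agf.policy_reference": event.get("policy_reference", ""),
        # OTel attribute values must be primitives or homogeneous arrays of them,
        # so the delegation chain is flattened to a list of strings.
        "agf.delegation_chain": [str(x) for x in event.get("delegation_chain", [])],
    }
    if "input_tokens" in event:
        attrs["gen_ai.usage.input_tokens"] = int(event["input_tokens"])
    return attrs


attrs = to_otel_attributes({
    "model_id": {"provider": "example-provider", "model": "example-model"},
    "action_type": "tool_called",
    "ring": "R0",
    "input_tokens": 120,
})
```

With the OpenTelemetry Python API, a dict like this could be attached to the active span via `span.set_attributes(attrs)`.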

Correlation Engine

Raw events are necessary but not sufficient. The correlation engine is where events become intelligence.

Correlation Rule Examples

Trust manipulation detection:

```
DETECT: trust_escalation_then_anomaly
PATTERN:
  - trust_level_changed(direction: increased) × N times over T days
  - followed by: high_stakes_action OR policy_violated
THRESHOLD: N ≥ 3, T ≤ 14 days
RESPONSE: immediate trust degradation, forensic investigation trigger
```

Ring bypass detection:

```
DETECT: verification_gap
PATTERN:
  - output_produced in Ring 0
  - NO verification_completed for same run_id within T seconds
  - output released
THRESHOLD: T = 30s
RESPONSE: quarantine output, alert security, flag for audit
```

Cross-pipeline poisoning:

```
DETECT: cross_pipeline_contamination
PATTERN:
  - agent_failed OR policy_violated in Pipeline A
  - output from Pipeline A used as input in Pipeline B within T hours
  - Pipeline B produces output without verification_completed
RESPONSE: quarantine Pipeline B outputs, trigger forensic walkback
```

Approval fatigue exploitation:

```
DETECT: rubber_stamping
PATTERN:
  - human reviewer: approval rate ≥ 95% over last N decisions
  - OR: median approval time < 10 seconds
  - AND: at least one high_stakes_action in approved set
RESPONSE: flag reviewer, escalate to second reviewer, alert management
```

Dual-Speed Architecture

The correlation engine runs at two speeds:

Fast path (sentinels) — sub-second:

- Known-pattern matching against pre-defined threat signatures

- Triggers Security Response Bus for pre-authorized containment actions

- Outputs: immediate trust degradation, quarantine signals, real-time alerts
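As an illustration of the kind of known-pattern check the fast path runs, here is the verification_gap rule from the correlation examples written as a batch function. It is a sketch under stated assumptions: timestamps are epoch seconds, and because the taxonomy has no explicit release event, the set of released run_ids is passed in as a hypothetical input. A real sentinel would evaluate the same condition incrementally as events stream in.

```python
def verification_gaps(events, released_run_ids, window_s=30.0):
    """Return sorted run_ids that produced output, were released, and had no
    verification_completed within window_s seconds of their first output."""
    first_output = {}
    verifications = {}
    for e in events:
        run, t = e["run_id"], e["timestamp"]
        if e["action_type"] == "output_produced":
            first_output[run] = min(first_output.get(run, t), t)
        elif e["action_type"] == "verification_completed":
            verifications.setdefault(run, []).append(t)
    gaps = []
    for run, t_out in first_output.items():
        verified_in_window = any(
            0 <= t - t_out <= window_s for t in verifications.get(run, [])
        )
        if not verified_in_window and run in released_run_ids:
            gaps.append(run)
    return sorted(gaps)


events = [
    {"run_id": "r1", "action_type": "output_produced", "timestamp": 0.0},
    {"run_id": "r1", "action_type": "verification_completed", "timestamp": 12.0},
    {"run_id": "r2", "action_type": "output_produced", "timestamp": 0.0},
    {"run_id": "r2", "action_type": "verification_completed", "timestamp": 45.0},  # too late
    {"run_id": "r3", "action_type": "output_produced", "timestamp": 5.0},  # unverified, not released
]
print(verification_gaps(events, released_run_ids={"r1", "r2"}))  # ['r2']
```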

Slow path (analysis) — hours to days:

- Cross-execution pattern analysis across full event history

- Behavioral drift modeling (how is this agent's behavior changing over time?)

- Memory evolution tracking (what has Ring 3 learned, and is it drifting?)

- Outputs: trend reports, Trust Ladder calibration signals, policy improvement recommendations
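The slow path is where multi-day patterns such as trust_escalation_then_anomaly get evaluated. A sketch for a single agent's history, using the rule's N ≥ 3 and T ≤ 14 days thresholds. It reads "N increases over T days" as N increases inside the T-day window ending at the anomaly, which is one interpretation among several, and it treats the rule's `high_stakes_action` (not in the event taxonomy) as a hypothetical action_type.

```python
from datetime import datetime, timedelta


def trust_escalation_then_anomaly(events, n=3, window=timedelta(days=14)):
    """True if a high-stakes action or policy violation is preceded by at
    least n trust increases inside the trailing window."""
    increases = []
    for e in sorted(events, key=lambda ev: ev["timestamp"]):
        t = e["timestamp"]
        if e["action_type"] == "trust_level_changed" and e.get("direction") == "increased":
            increases.append(t)
        elif e["action_type"] in ("high_stakes_action", "policy_violated"):
            recent = [x for x in increases if t - x <= window]
            if len(recent) >= n:
                return True
    return False


start = datetime(2026, 3, 1)
raise_trust = [
    {"action_type": "trust_level_changed", "direction": "increased",
     "timestamp": start + timedelta(days=d)}
    for d in (0, 4, 9)
]
history = raise_trust + [
    {"action_type": "high_stakes_action", "timestamp": start + timedelta(days=12)}
]
print(trust_escalation_then_anomaly(history))  # True
```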

Operational Playbooks

Playbook: Behavioral Anomaly Detected

Trigger: Sentinel detects behavioral deviation exceeding threshold.

- Immediate (automated): Trust level decremented. Outputs from current session quarantined pending review.

- Within minutes (SOC): Review sentinel alert. Check provenance chain for anomalous action. Confirm quarantine scope is correct.

- Investigation: Pull full event timeline for affected agent. Check for trust manipulation trajectory (slow path correlation). Identify entry point of anomaly.

- Containment decision: If confirmed attack → isolate agent, preserve forensic state, trigger incident response. If false positive → restore trust level, update sentinel threshold, document false positive.
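The playbook's automated first step could look like the sketch below. The document does not define how the sentinel measures deviation; a z-score against the agent's baseline for a single metric (say, tool calls per session) is an assumed stand-in, and trust levels are modeled as hypothetical integers with 0 as the floor.

```python
from statistics import mean, stdev


def behavioral_anomaly_response(baseline, observed, trust_level, session_outputs,
                                z_threshold=3.0):
    """Return (new_trust_level, quarantined_outputs) for one observation.

    baseline: past values of one behavioral metric for this agent. With fewer
    than two baseline points there is no "normal" to compare against, so
    nothing is triggered.
    """
    if len(baseline) < 2:
        return trust_level, []
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        deviant = observed != mu
    else:
        deviant = abs(observed - mu) / sigma > z_threshold
    if not deviant:
        return trust_level, []
    # Decrement trust (floored at 0) and quarantine this session's outputs pending review.
    return max(trust_level - 1, 0), list(session_outputs)


baseline = [10, 12, 11, 9, 10, 11, 12, 10]
print(behavioral_anomaly_response(baseline, observed=40, trust_level=2,
                                  session_outputs=["out-1", "out-2"]))
# (1, ['out-1', 'out-2'])
```

In the false-positive branch of the playbook, the corresponding actions would be restoring the previous trust level and raising `z_threshold` for this metric.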

Playbook: Provenance Chain Gap

Trigger: Governance detection flags output with incomplete provenance.

- Immediate (automated): Output flagged, release blocked pending investigation.

- Investigation: Identify which ring boundary produced the gap. Check for ring bypass pattern (verification_gap correlation rule).

- Root cause: Config error → fix and re-process. Active bypass attempt → escalate to security, treat as ring bypass incident.

- Resolution: Either complete provenance chain (if recoverable) or reject output and log the gap as a governance violation.
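The investigation step (finding which ring boundary produced the gap) amounts to walking the chain backwards via `parent_event_id`. A sketch, assuming every event dict has `event_id`, `ring`, and `parent_event_id`, and assuming (hypothetically) that a releasable output needs R0, R1, and R2 events somewhere in its ancestry:

```python
REQUIRED_RINGS = ("R0", "R1", "R2")  # assumption: execution, verification, governance


def provenance_gaps(events, final_event_id):
    """Walk parent_event_id links back from final_event_id.

    Returns the required rings never seen on the chain and the first parent
    id that points to an event missing from the store (a broken link).
    """
    by_id = {e["event_id"]: e for e in events}
    seen_rings, visited, broken_link = set(), set(), None
    current = by_id.get(final_event_id)
    while current is not None and current["event_id"] not in visited:
        visited.add(current["event_id"])  # guards against cyclic links
        seen_rings.add(current["ring"])
        parent = current.get("parent_event_id")
        if parent is None:
            break
        if parent not in by_id:
            broken_link = parent
            break
        current = by_id[parent]
    missing = [r for r in REQUIRED_RINGS if r not in seen_rings]
    return {"missing_rings": missing, "broken_link": broken_link}


chain = [
    {"event_id": "e1", "ring": "R0", "parent_event_id": None},
    {"event_id": "e2", "ring": "R1", "parent_event_id": "e1"},
    {"event_id": "e3", "ring": "R2", "parent_event_id": "e2"},
]
print(provenance_gaps(chain, "e3"))  # {'missing_rings': [], 'broken_link': None}
```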

Playbook: Cross-Pipeline Contamination Suspected

Trigger: Cross-pipeline poisoning correlation rule fires.

- Immediate: Quarantine all downstream pipeline outputs that used upstream compromised output as input.

- Scope: Use provenance chains to trace all derivations of the contaminated output. Identify full blast radius.

- Investigation: Review upstream failure. Determine if contamination was adversarial or accidental.

- Remediation: Reject contaminated outputs. Re-process from last known-good checkpoint. Review cross-pipeline data flow policy.
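Scoping the blast radius is a downstream graph traversal over provenance links. A sketch using breadth-first search, assuming provenance is available as a hypothetical mapping from each output id to the ids of the outputs it consumed:

```python
from collections import deque


def blast_radius(derived_from, contaminated):
    """Return every output transitively derived from `contaminated`.

    derived_from: {output_id: [input_output_id, ...]}. The mapping is inverted
    into source -> derived edges, then searched breadth-first.
    """
    children = {}
    for output, inputs in derived_from.items():
        for source in inputs:
            children.setdefault(source, []).append(output)
    affected, queue = set(), deque([contaminated])
    while queue:
        node = queue.popleft()
        for child in children.get(node, []):
            if child not in affected:  # visit each output once
                affected.add(child)
                queue.append(child)
    return affected


derived_from = {"B1": ["A1"], "B2": ["A2"], "C1": ["B1", "X9"], "C2": ["C1"]}
print(sorted(blast_radius(derived_from, "A1")))  # ['B1', 'C1', 'C2']
```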

Playbook: Human Oversight Fatigue

Trigger: Rubber-stamping detection fires for a reviewer.

- Immediate: Route pending approvals to a second reviewer. Do not alert the fatigued reviewer; an alert could let a manipulated or compromised reviewer adapt their behavior and evade detection.

- Review: Audit recent approvals by the flagged reviewer. Check if any high-stakes actions were waved through without adequate review.

- Intervention: If approvals were inadequate → re-review high-stakes decisions. Notify management.

- Prevention: Adjust approval queue rate limits. Implement mandatory cooling-off periods. Review cognitive load on this reviewer's queue.
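A sketch of the rubber_stamping rule that triggers this playbook, for one reviewer's decision history. The rule leaves N open, so `last_n=50` is a hypothetical default; the decision fields are assumed, and the OR/AND conditions are read as (high approval rate OR fast median approval) AND at least one approved high-stakes action.

```python
from statistics import median


def is_rubber_stamping(decisions, last_n=50, min_rate=0.95, max_median_s=10.0):
    """decisions: oldest-first dicts with "approved" (bool), "seconds"
    (time spent reviewing) and "high_stakes" (bool)."""
    recent = decisions[-last_n:]
    if not recent:
        return False
    approved = [d for d in recent if d["approved"]]
    approval_rate = len(approved) / len(recent)
    fast = bool(approved) and median([d["seconds"] for d in approved]) < max_median_s
    high_stakes_approved = any(d["high_stakes"] for d in approved)
    return (approval_rate >= min_rate or fast) and high_stakes_approved


hasty = [{"approved": True, "seconds": 4, "high_stakes": i == 0} for i in range(20)]
careful = [{"approved": i % 3 != 0, "seconds": 45, "high_stakes": True} for i in range(30)]
print(is_rubber_stamping(hasty), is_rubber_stamping(careful))  # True False
```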

Zero Trust Monitoring

Observability itself must operate under zero trust assumptions. The monitoring infrastructure is a high-value target.

Principles:

- Event integrity: Events are signed at emission. Correlation engine verifies signatures before processing. Tampered events are flagged, not silently dropped.

- Monitor the monitors: Ring 1 verification applies to the Intelligence layer itself. Behavioral anomalies in the monitoring system trigger escalation.

- Separation of evidence: Forensic evidence stores are append-only, write-once. The correlation engine reads but cannot modify.

- Dead-man's switches: If the correlation engine stops producing output for T minutes, automatic alerts fire — silence is itself a signal.
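Two of these principles are small enough to sketch directly: signing events at emission and verifying them before correlation, and a dead-man's switch on the correlation engine's output. The document does not name a signing scheme; a shared-key HMAC-SHA256 over canonical JSON stands in here for brevity, although an asymmetric scheme (e.g. Ed25519) would let the correlation engine verify events without being able to forge them. Function and class names are hypothetical.

```python
import hashlib
import hmac
import json
import time


def _canonical(event: dict) -> bytes:
    # Sorted keys and fixed separators so signer and verifier hash identical bytes.
    return json.dumps(event, sort_keys=True, separators=(",", ":")).encode()


def sign_event(event: dict, key: bytes) -> dict:
    return {**event, "signature": hmac.new(key, _canonical(event), hashlib.sha256).hexdigest()}


def verify_event(signed: dict, key: bytes) -> bool:
    body = {k: v for k, v in signed.items() if k != "signature"}
    expected = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    # Constant-time comparison; callers should flag (not drop) events that fail.
    return hmac.compare_digest(expected, str(signed.get("signature", "")))


class DeadMansSwitch:
    """Reports silence when no correlation output has been seen for timeout_s."""

    def __init__(self, timeout_s: float, clock=time.monotonic):
        self.timeout_s = timeout_s
        self.clock = clock
        self.last_output = clock()

    def heartbeat(self) -> None:
        # Call whenever the correlation engine produces output.
        self.last_output = self.clock()

    def silent(self) -> bool:
        return self.clock() - self.last_output > self.timeout_s


key = b"demo-key"
signed = sign_event({"event_id": "e1", "ring": "R1", "action_type": "verification_completed"}, key)
print(verify_event(signed, key), verify_event({**signed, "ring": "R0"}, key))  # True False
```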

Observability Maturity Model

| Level | Name | Summary | Capabilities |
|---|---|---|---|
| 1 | Blind | No structured observability | Log files only, no structured events, no correlation |
| 2 | Visible | Basic event collection | Structured events at ring boundaries, basic dashboards, manual incident response |
| 3 | Monitored | Active detection | Behavioral baselines, sentinel fast path, governance compliance dashboards, documented playbooks |
| 4 | Intelligent | Correlated intelligence | Dual-speed correlation engine, cross-pipeline detection, Trust Ladder auto-calibration from observability data |
| 5 | Autonomous | Self-improving observability | Ring 3 drives threshold recalibration, correlation rules evolve from observed patterns, playbooks update from post-incident analysis |

For EU AI Act high-risk systems: Level 3 is the minimum for Art. 12 compliance. Level 4 is recommended for high-throughput or high-stakes systems where manual correlation is insufficient.

What This Is NOT

Agentic observability as described here is an architecture pattern, not a product. As of March 2026:

- No single platform implements the full stack. Commercial platforms (LangSmith, Arize, Braintrust, Helicone) cover quality monitoring. Security SIEMs (Splunk, Sentinel) cover security events. GRC tools cover compliance. The unified approach described here requires integration or custom implementation.

- Behavioral baselines require data. You cannot detect deviation until you have established what "normal" looks like. New agents have no baseline.

- Correlation rules require tuning. Generic rules produce false positives. Effective correlation requires domain-specific calibration.

- The EDR (endpoint detection and response) pattern for agents is early. Memory introspection and state inspection tooling for agentic systems is nascent. The pattern is clear; the tooling is immature.

Operations Checklist

Event collection:

- Structured event emission from all ring boundaries

- Common event envelope schema deployed

- Event signing at emission (integrity verification)

- Append-only forensic evidence store

Detection:

- Behavioral baseline established per agent (requires ≥2 weeks of data)

- Sentinel fast path configured with pre-authorized response classes

- Cross-pipeline correlation rules active

- Rubber-stamping detection configured for all human reviewers

Playbooks:

- Behavioral anomaly playbook documented and tested

- Provenance chain gap playbook documented and tested

- Incident severity classification defined

- Escalation paths to security and management documented

Governance evidence:

- Audit package generation tested (time range, agent, case type)

- Regulatory evidence export configured (EU AI Act, NIST)

- Compliance dashboard covering gate compliance, policy violation rates, provenance coverage

Zero trust monitoring:

- Dead-man's switches active on correlation engine

- Monitoring infrastructure itself subject to Ring 1 verification

- Evidence tamper detection configured

Related: Security Profile — the three-level security model and Security Response Bus that observability feeds. Platform Profile — deployment infrastructure and event emission points. GRC Profile — how observability evidence maps to regulatory requirements.